
"Where does ChatGPT get its data?" is one of the most common questions users ask when evaluating AI credibility. Understanding where the information originates, how neural networks process it, and where generation falls short helps separate facts from assumptions.

At Dabudai, we analyze AI behavior through our AI search visibility optimization tool, tracking how language models generate answers and cite references. This article explains training data, hallucinations, probability modeling, and how to verify AI outputs responsibly.

Where Does ChatGPT Get Its Information From?

The short answer to where ChatGPT gets its information: the model is trained on a mixture of licensed datasets, publicly available internet content, and human-created training materials. It does not browse the web in real time unless it is explicitly connected to external tools.

According to OpenAI’s[1] public documentation, training includes diverse publications, educational resources, technical documentation, and structured datasets.

Neural networks analyze patterns across billions of text samples. During generation, the model predicts the most probable next word based on probability distributions learned from training. Context plays a central role: phrasing influences output quality and specificity.
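As a minimal sketch of the next-word prediction described above, the toy example below converts made-up scores for a few candidate words into a probability distribution (softmax) and picks the most probable one. The vocabulary, the scores, and the function names are illustrative assumptions, not values or code from any real model.

```python
import math

def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(scores.values())  # subtract the max for numerical stability
    exps = {word: math.exp(score - peak) for word, score in scores.items()}
    total = sum(exps.values())
    return {word: value / total for word, value in exps.items()}

def most_probable_next_word(scores):
    """Return the candidate word with the highest probability."""
    probabilities = softmax(scores)
    return max(probabilities, key=probabilities.get)

# Hypothetical scores a model might assign after "The capital of France is"
toy_scores = {"Paris": 6.0, "Lyon": 2.5, "London": 1.0, "banana": -3.0}

probabilities = softmax(toy_scores)
prediction = most_probable_next_word(toy_scores)
```

A real model scores tens of thousands of sub-word tokens rather than four whole words, but the principle is the same: the output is the likeliest continuation, not a looked-up fact.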

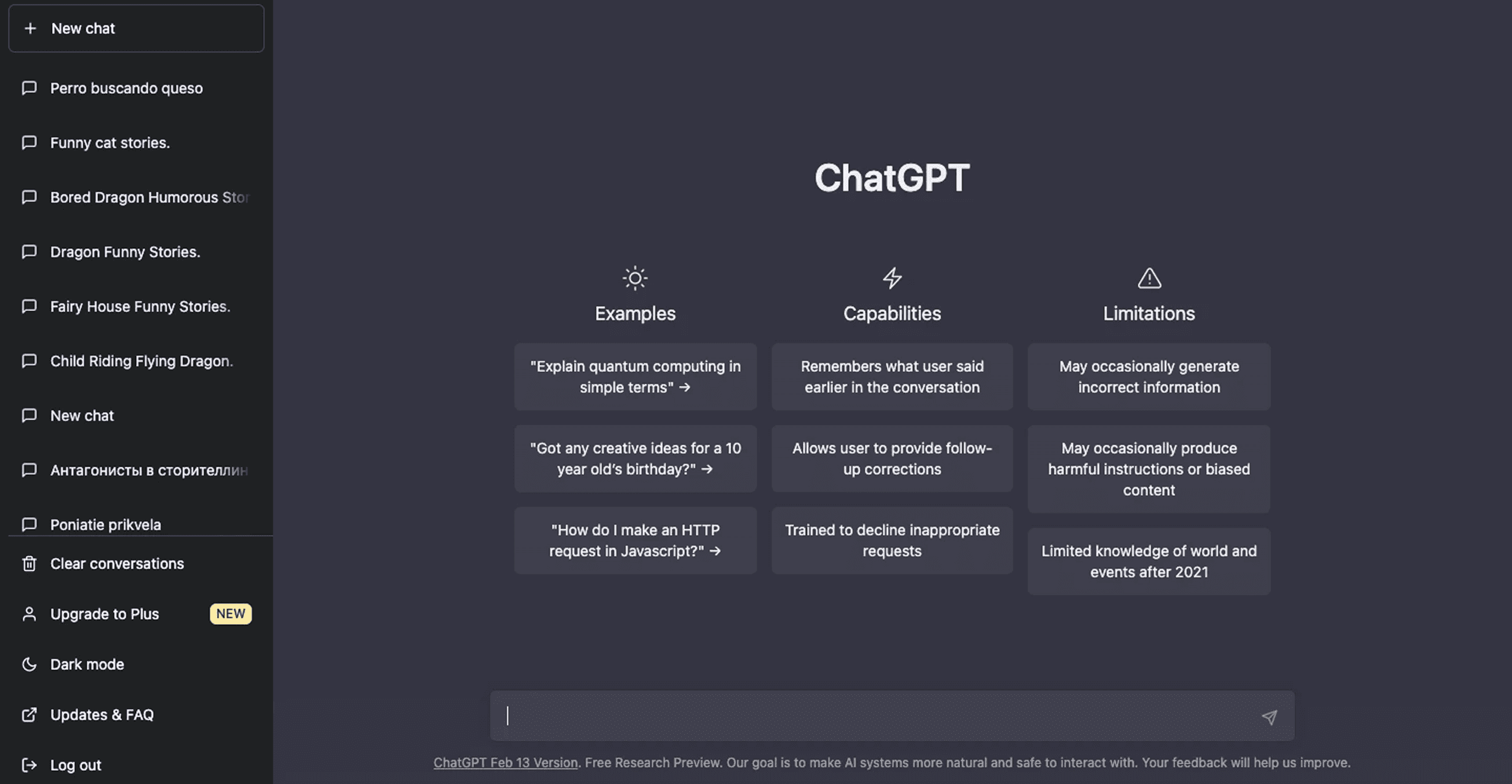

What Is the Source of ChatGPT and How Training Works

The source of ChatGPT is not a single database but aggregated training material. These ChatGPT data sources include licensed data, input from human trainers, and publicly accessible texts.

On its own, ChatGPT does not store articles or retrieve documents. Instead, neural algorithms use statistical modeling to generate responses. This is not search; it is probability-driven text prediction.

Simplified Training vs. Retrieval Comparison

| Feature | Training-Based Model | Search Engine |
| --- | --- | --- |
| Data Access | Pre-trained datasets | Live internet |
| Output | Generated text | Retrieved links |
| Citations | May simulate | Direct references |
| Updating | Periodic retraining | Real-time indexing |

This distinction explains why users sometimes misunderstand the sources ChatGPT references.

Does ChatGPT Make Up Sources or Choose at Least One Source?

Whether ChatGPT makes up sources is a valid concern. Hallucinations occur when the model generates plausible but incorrect citations. These are not intentional fabrications but side effects of probability-based generation.

Similarly, the idea that ChatGPT chooses at least one source when answering is inaccurate. The model does not select or verify a specific publication in real time. Instead, it synthesizes patterns learned during training.

Research from Stanford Human-Centered AI[2] confirms that generative systems can produce convincing but unverifiable references if prompted for citations without verification layers.

Is ChatGPT a Reliable Source?

Whether ChatGPT is a reliable source depends on context. For general explanations, summaries, or brainstorming, it can provide high-quality responses. However, for legal, medical, or financial decisions, independent verification is essential.

Credibility depends on:

- Quality of training exposure
- Clarity of user query
- Context provided
- Bias detection
- Monitoring for hallucinations

Understanding Citations and AI Hallucinations

AI-generated citations may resemble real publications but occasionally contain inaccuracies. These errors arise because the model predicts what a plausible reference looks like rather than looking one up.

A simple evaluation checklist:

| Signal | What to Check |
| --- | --- |
| Author name | Exists in field? |
| Publication | Indexed source? |
| DOI/URL | Verifiable? |
| Date | Logical timeline? |
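The checklist can be sketched as a small screening function. This is a hedged illustration only: the `Citation` fields, the `checklist_flags` name, the lookup sets, the 1900 lower bound, and the sample citations are all hypothetical. The DOI check tests only the general shape of a DOI, so a well-formed DOI still has to be resolved by hand.

```python
import re
from dataclasses import dataclass
from datetime import date

# Shape check only: "10.", a 4-9 digit registrant code, "/", then a suffix.
DOI_PATTERN = re.compile(r"^10\.\d{4,9}/\S+$")

@dataclass
class Citation:
    author: str
    publication: str
    doi: str
    year: int

def checklist_flags(citation, known_authors, indexed_publications, today):
    """Return one warning per checklist signal that fails."""
    flags = []
    if citation.author not in known_authors:
        flags.append("author not found in field")
    if citation.publication not in indexed_publications:
        flags.append("publication not indexed")
    if not DOI_PATTERN.match(citation.doi):
        flags.append("DOI format invalid")
    # Assumed plausibility window: 1900 up to the current year.
    if not 1900 <= citation.year <= today.year:
        flags.append("date outside logical timeline")
    return flags

# Hypothetical reference data a reviewer might maintain by hand.
known_authors = {"A. Researcher"}
indexed_publications = {"Example Journal"}
today = date(2025, 1, 1)

suspect = Citation("J. Invented", "Journal of Imaginary Results", "doi-unknown", 2090)
plausible = Citation("A. Researcher", "Example Journal", "10.1234/example.5678", 2020)

suspect_flags = checklist_flags(suspect, known_authors, indexed_publications, today)
plausible_flags = checklist_flags(plausible, known_authors, indexed_publications, today)
```

An empty flag list means only that nothing looked obviously wrong. It does not prove the reference exists.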

MIT Media Lab[3] research emphasizes evaluation over blind trust in automated systems.

How Context and Probability Shape Answers

Probability drives word prediction. Context determines coherence. When prompts are vague, outputs may vary. When context is specific, response accuracy typically improves.

This explains why two similar queries may produce slightly different answers. The neural system generates language, not citations from memory.
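The variation between runs can be illustrated with a toy sampler. The sketch below assumes a temperature parameter, a common sampling control in language models generally. The source does not say how ChatGPT is configured, and the word scores and function name are made up for illustration.

```python
import math
import random

def sample_next_word(scores, temperature, rng):
    """Draw one word at random, weighted by softmax(scores / temperature)."""
    words = list(scores)
    scaled = [scores[word] / temperature for word in words]
    peak = max(scaled)  # subtract the max for numerical stability
    weights = [math.exp(value - peak) for value in scaled]
    return rng.choices(words, weights=weights, k=1)[0]

# Hypothetical scores for the word after "ChatGPT is a ... source"
toy_scores = {"reliable": 2.0, "useful": 1.8, "helpful": 1.5, "risky": 0.5}

rng = random.Random(0)  # fixed seed so the sketch is repeatable

# Moderate temperature: several words have a realistic chance of being drawn.
varied = {sample_next_word(toy_scores, 1.0, rng) for _ in range(200)}

# Very low temperature: the top-scoring word dominates almost completely.
focused = {sample_next_word(toy_scores, 0.01, rng) for _ in range(200)}
```

Because each word is drawn from a distribution rather than looked up, repeating the same prompt can produce different wording, while a sharply peaked distribution produces near-identical output.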

How to Verify ChatGPT Sources and Improve Credibility

To improve credibility:

- Cross-check with academic publications.
- Use trusted databases (Google Scholar, PubMed, official documentation).
- Confirm author and publisher reputation.
- Validate statistics independently.

For professionals monitoring AI output quality, our research articles in the Dabudai blog discuss structured evaluation frameworks and bias monitoring techniques.

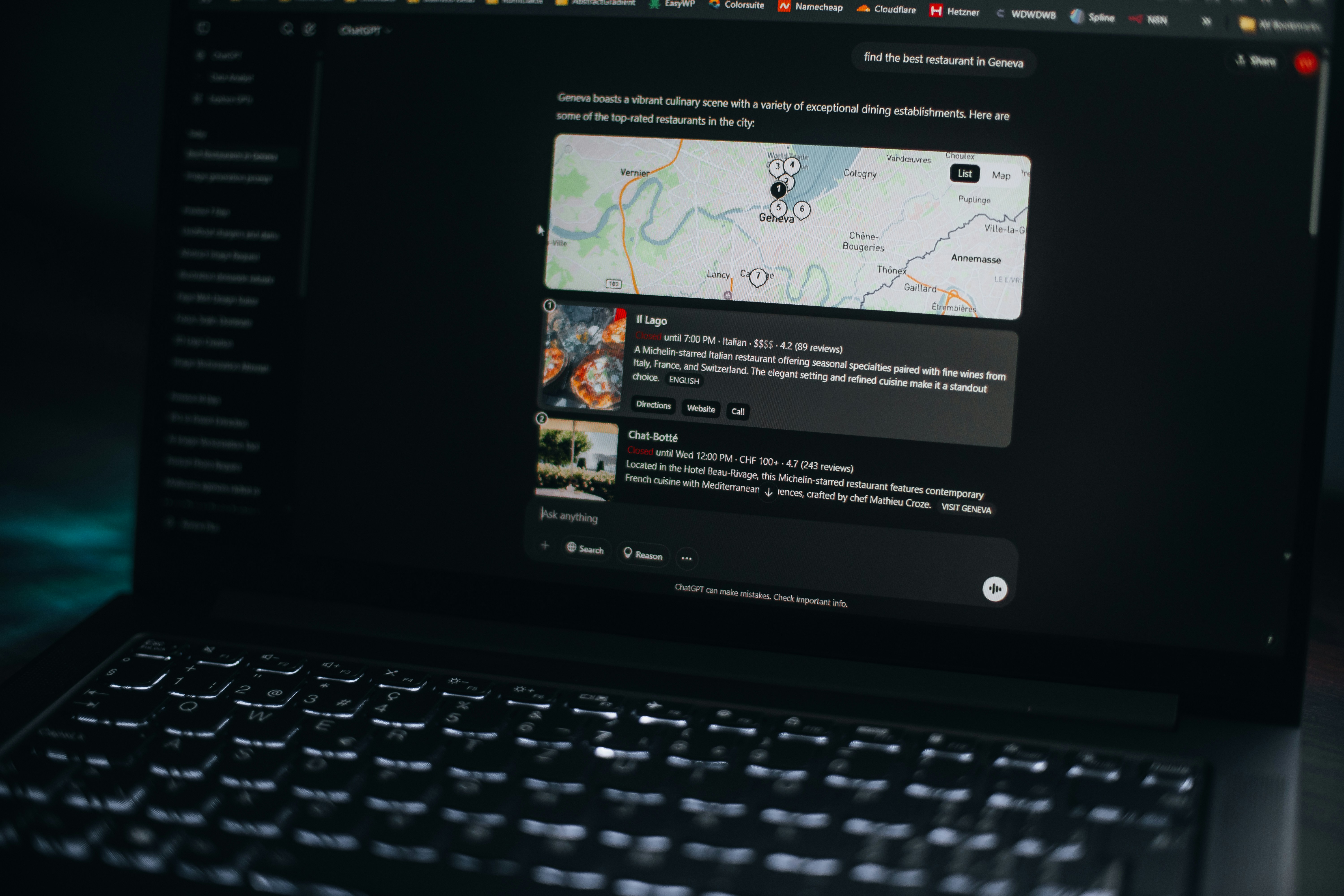

Sources ChatGPT Uses vs. Real-Time Data Access

Training data differs from live internet access. ChatGPT does not continuously update itself. It reflects patterns learned up to its last training cycle.

Misconception: AI answers are pulled from current online articles.

Reality: Responses are generated from pre-trained knowledge unless external retrieval tools are connected.
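The difference can be sketched as a prompt-building step with an optional retrieval tool. This is a simplified, hypothetical illustration, not a description of how OpenAI implements tool use: `build_prompt`, `fake_retriever`, and the prompt wording are all assumptions.

```python
from typing import Callable, List, Optional

def build_prompt(question: str,
                 retriever: Optional[Callable[[str], List[str]]] = None) -> str:
    """Assemble the text a model would see for one question.

    Without a retriever the model can rely only on what it learned in training.
    With one, documents fetched at query time are placed in the prompt.
    """
    if retriever is None:
        return f"Question: {question}\nAnswer from training knowledge only."
    documents = retriever(question)
    context = "\n".join(f"- {document}" for document in documents)
    return f"Context retrieved at query time:\n{context}\nQuestion: {question}"

def fake_retriever(question: str) -> List[str]:
    """Stand-in for a search tool; returns a canned snippet for illustration."""
    return [f"Snippet matching '{question}'"]

offline_prompt = build_prompt("What changed this week?")
online_prompt = build_prompt("What changed this week?", retriever=fake_retriever)
```

Only the second prompt contains information gathered after training, which is why answers without a connected tool reflect the last training cycle.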

Google’s People + AI Research[4] initiative also highlights the difference between generative systems and retrieval-based systems.

Key Takeaways About ChatGPT Data Sources

Where does ChatGPT get its data? From licensed data, public internet content, and human-created training materials.

Does ChatGPT make up sources? It can generate incorrect citations due to hallucinations.

Is ChatGPT a reliable source? It is useful for general knowledge, but verification is recommended for critical decisions.

Does ChatGPT choose at least one source? No, it generates answers based on learned patterns, not document selection.

Understanding these mechanics improves responsible AI usage and strengthens evaluation practices.

FAQ

1. Where does ChatGPT get its data?

ChatGPT gets its data from licensed datasets, publicly available internet content, and human-created training materials. It does not access a live database during generation.

2. Where does ChatGPT get its information from?

The model learns from large-scale publications and structured datasets. It generates responses using probability and context rather than retrieving a specific source.

3. Does ChatGPT make up sources?

Yes, hallucinations can occur. These happen because the system predicts plausible citations without verifying them in real time.

4. Does ChatGPT choose at least one source?

No. It does not select or verify a single document. Responses are created by neural algorithms combining learned patterns.

5. Is ChatGPT a reliable source?

It can provide accurate overviews but should not replace independent fact-checking for high-stakes decisions.

Sources:

[1] OpenAI – How ChatGPT Works: https://openai.com/policies/how-chatgpt-works

[2] Stanford HAI – Research on Generative AI: https://hai.stanford.edu/research

[3] MIT Media Lab – Conversational Systems Research: https://www.media.mit.edu/research/

[4] Google PAIR – AI Interaction Frameworks: https://pair.withgoogle.com/