
Choose Dabudai if you want a closed-loop workflow: measure → explain → execute → re-measure, with Smart Recommendations and a prioritized backlog of actions.

Choose Peec.ai if you want a clean AI search analytics tool focused on monitoring prompts and improving based on citations/sources.

If the main question is: “Why do competitors win in AI answers, and what exactly should we change?” → Dabudai is built for this.

If the main question is: “How do we track our AI visibility and citations with minimal setup?” → Peec.ai is a strong fit.

AI platforms are increasingly shaping buying decisions. Instead of running multiple searches, many users now turn directly to tools like ChatGPT, Gemini, Claude, Perplexity, or Copilot to ask what to choose and why.

When your brand is absent — or mentioned without a clear recommendation — the opportunity may be lost before a website visit even happens.

This shift is why platforms such as Dabudai and Peec.ai have emerged. Both aim to help teams understand how AI systems present their brand and how to improve that visibility.

Below is a practical comparison of Dabudai and Peec.ai: where each one is strongest, how they differ, and which type of team each platform is best suited for.

What you will find in this article

A quick explanation of what Dabudai and Peec.ai focus on

What Dabudai does better (and when it matters)

What Peec.ai does better (and when it matters)

A feature comparison table with a “why it matters” column

A simple choice guide + FAQ you can scan in 2 minutes

Where the difference actually lies

At first glance, both products sit under the same label: AI visibility. That can make them seem interchangeable. But the real difference is not in the metrics — it’s in what the platform is designed to deliver to your team.

Many teams put both products in the same category of AI visibility optimization tools, but the key difference is what “optimization” means in practice. One approach optimizes by producing a structured execution plan and measuring its impact; the other optimizes by surfacing clean monitoring data, citations, and insights that teams can act on.

With Dabudai, the end result is a structured improvement loop.

You measure AI visibility, understand where and why you lose (by topic, prompt, competitor), turn that into a prioritized action plan, and then track the impact. The system is built around moving from insight to execution.

With Peec.ai, the end result is clean AI search analytics.

You define prompts, monitor how AI responds, analyze visibility and citations, and use that information to guide optimization decisions.

Both approaches are valid. The better fit depends on your operating model:

If you want an action-oriented growth system with built-in prioritization → Dabudai.

If you want a focused monitoring and citation analytics layer → Peec.ai.

What Dabudai does better

1) Closed-loop workflow: Measure → Explain → Execute

Dabudai does not stop at metrics. It works in three steps:

Measure:

Track brand visibility in AI answers (coverage, Share of Voice, average position) and how it changes by topic and AI engine.

Explain:

Show exactly where you lose (topic → prompt → competitor) and why (missing signals/content/sources).

Execute:

Turn this into a concrete action plan — then re-measure the effect.

Why this matters: most tools end at dashboards. Dabudai ends with the answer to:

“What should we do next to win AI answers?”

2) Smart Recommendations = prioritized backlog of actions (not just “insights”)

Dabudai generates Smart Recommendations as a ranked task list:

what to change (specific action)

where (page/topic/prompt)

for which AI engine

expected impact / effort (so teams act in the right order)
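As a rough illustration of what ordering a backlog by expected impact and effort can look like, the sketch below sorts a few hypothetical recommendations by an impact-to-effort ratio. The `Recommendation` fields, the 1 to 5 scales, and the ratio itself are assumptions made for this example; the article does not describe how Dabudai actually scores or orders its recommendations.

```python
from dataclasses import dataclass


@dataclass
class Recommendation:
    action: str   # what to change
    target: str   # where: page, topic, or prompt
    engine: str   # which AI engine the action is aimed at
    impact: int   # assumed scale: 1 (low) to 5 (high)
    effort: int   # assumed scale: 1 (low) to 5 (high)


def prioritize(recs):
    """Return recommendations ordered by impact-to-effort ratio, highest first."""
    return sorted(recs, key=lambda r: r.impact / r.effort, reverse=True)


# Hypothetical backlog items, for illustration only.
backlog = [
    Recommendation("Add a comparison table", "/pricing", "ChatGPT", impact=4, effort=2),
    Recommendation("Publish a customer case study", "topic: onboarding", "Perplexity", impact=5, effort=5),
    Recommendation("Rewrite the FAQ answer", "prompt: best tool for X", "Gemini", impact=3, effort=1),
]

ranked = prioritize(backlog)
for rank, rec in enumerate(ranked, start=1):
    print(rank, rec.action, f"({rec.engine}, {rec.target})")
```

A plain ratio is only one possible ordering rule. A team might instead weight impact more heavily or cap effort per sprint; the point is that each task carries enough fields to be sorted rather than just read.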

First recommendations typically appear after 10 days of tracking (baseline data collection).

Baseline data collected in 10 days

| Package | AI providers | AI answers collected in 10 days |
| --- | --- | --- |
| Business / 20 prompts | 4 | 1,600 |
| Business / Agency / 50 prompts | 4 | 4,000 |
| Business / 100 prompts | 4 | 8,000 |
| Business / 200 prompts | 4 | 16,000 |
| Business / 400 prompts | 4 | 32,000 |
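The figures in the table are consistent with each prompt being run twice per day on each provider (1,600 / (20 × 4 × 10) = 2). That rate is inferred from the numbers rather than stated in the article, so treat `runs_per_day=2` in the sketch below as an assumption, and `answers_collected` as a hypothetical helper name.

```python
def answers_collected(prompts, providers=4, days=10, runs_per_day=2):
    """Estimate AI answers gathered during the baseline window.

    runs_per_day=2 is inferred from the table (1,600 / (20 * 4 * 10) = 2);
    it is not a documented product setting.
    """
    return prompts * providers * days * runs_per_day


for prompts in (20, 50, 100, 200, 400):
    print(f"{prompts} prompts -> {answers_collected(prompts):,} answers")
```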

Why this matters: teams don’t need more charts — they need a plan that actually shifts AI answers.

3) 3rd-party Visibility Playbook: where to publish + what to publish

AI answers are influenced not only by your website — but by the third-party sources AI trusts.

Dabudai analyzes third-party sources and converts this into an actionable plan:

Top 3rd-party sources to win AI answers (media, directories, communities, partner blogs, etc.)

Topic & angle analysis (what works for competitors, where you have gaps)

Content to publish (format, thesis, structure, target landing page, which AI engines matter most)

Prioritization by expected impact

Why this matters: most teams do outreach randomly. Dabudai gives a publication plan that is actually tied to AI outcomes.

4) AI Visibility Map: root-cause in 2 clicks

Dabudai provides an AI Visibility Map:

Company → Topics → Prompts

If company-level visibility drops, you instantly see:

which topic pulls the metric down

which prompts are lost

which competitor takes your share

what exactly to strengthen (via recommendations)
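The drill-down described above can be sketched as a comparison of two snapshots, grouped first by topic and then by prompt. Everything here is hypothetical: the record layout, the brand names, and the use of a simple mention-rate difference are assumptions for illustration, not Dabudai's data model.

```python
from collections import Counter

BRAND = "Acme"  # hypothetical brand name

# Hypothetical records: (period, topic, prompt, brands mentioned in the answer)
answers = [
    ("before", "pricing", "best budget tool", ["Acme", "Rival"]),
    ("before", "pricing", "cheapest option", ["Acme"]),
    ("before", "integrations", "tools with API", ["Acme", "Other"]),
    ("after", "pricing", "best budget tool", ["Rival"]),
    ("after", "pricing", "cheapest option", ["Rival", "Other"]),
    ("after", "integrations", "tools with API", ["Acme", "Other"]),
]


def coverage_by(records, key_index):
    """Share of answers mentioning BRAND, grouped by (period, key)."""
    hits, totals = Counter(), Counter()
    for rec in records:
        key = (rec[0], rec[key_index])
        totals[key] += 1
        hits[key] += BRAND in rec[3]
    return {k: hits[k] / totals[k] for k in totals}


def biggest_drop(cov):
    """Return the key whose coverage fell the most between the two periods."""
    keys = {k for _, k in cov}
    deltas = {k: cov.get(("after", k), 0.0) - cov.get(("before", k), 0.0) for k in keys}
    return min(deltas, key=deltas.get)


# Step 1: which topic pulls the metric down?
worst_topic = biggest_drop(coverage_by(answers, 1))

# Step 2: which prompts in that topic were lost?
in_topic = [r for r in answers if r[1] == worst_topic]
prompt_cov = coverage_by(in_topic, 2)
lost_prompts = sorted(
    p for (period, p), c in prompt_cov.items()
    if period == "after" and c < prompt_cov.get(("before", p), 0.0)
)

# Step 3: which competitor appears most often in the lost answers?
rivals = Counter(
    b for r in in_topic
    if r[0] == "after" and r[2] in lost_prompts
    for b in r[3] if b != BRAND
)
top_competitor = rivals.most_common(1)[0][0]

print(worst_topic, lost_prompts, top_competitor)
```

The same grouping function is reused at both levels, which is what makes a Company → Topics → Prompts hierarchy cheap to navigate: each click narrows the records and recomputes the same metric.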

Why this matters: instead of “average visibility”, you get a precise attack point.

5) Agency-ready white label: your own platform in ~10 minutes

Dabudai makes agency delivery and resale easier:

white-label (logo/colors) in 1 minute

domain connection in ~10 minutes

multi-client mode

client sees the platform as your own product

Why this matters: offering the platform under their own brand can help agencies build trust, improve close rates, and increase deal size.

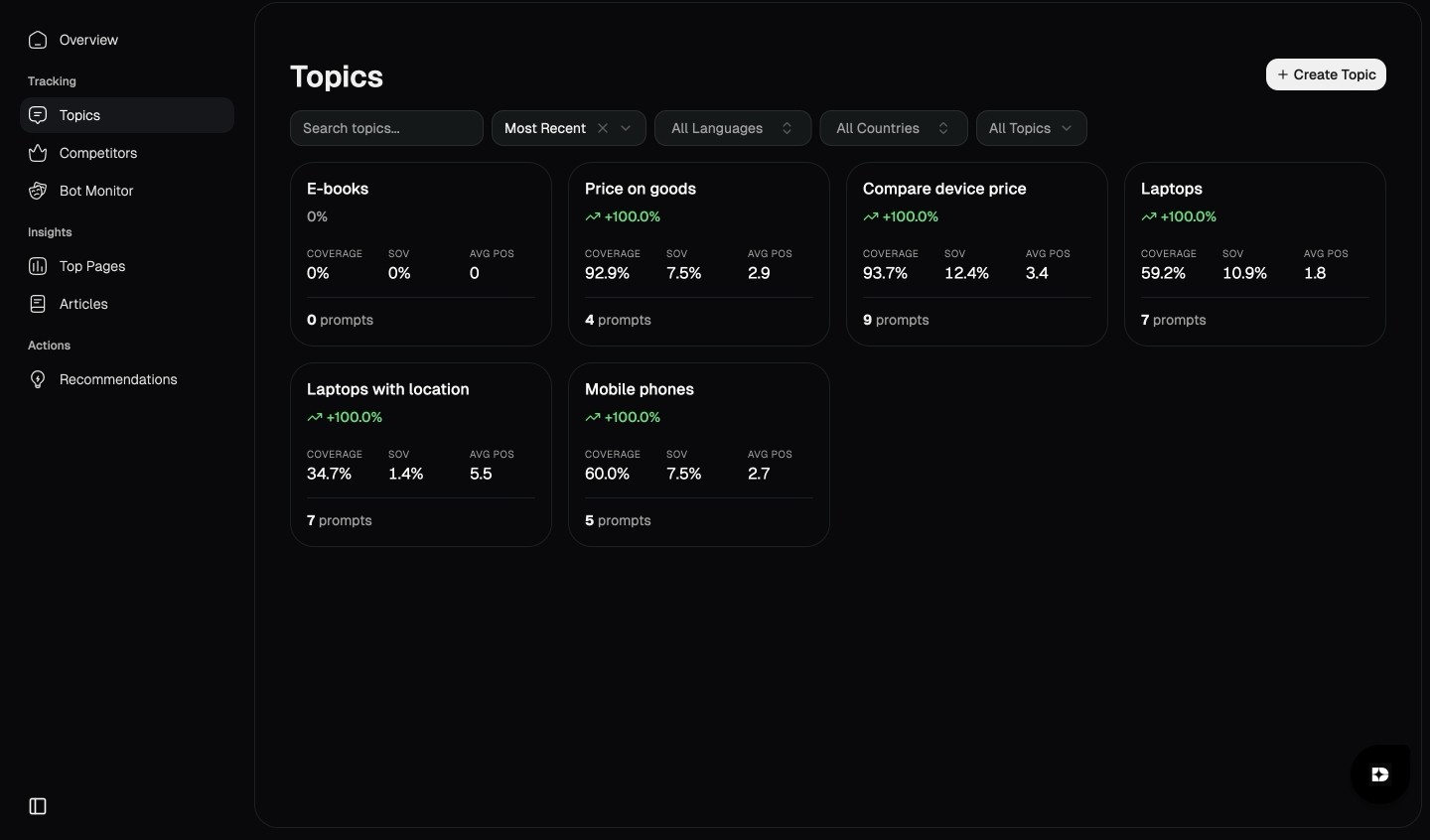

What Peec.ai does better

Peec.ai is designed as an AI Search Analytics platform with a strong emphasis on simplicity and citations.

If your main goal is a simple, monitoring-first setup, Peec.ai may be the best AI overview tracking tool for teams that want quick visibility into how AI answers change for key prompts.

1) Clean workflows (no feature overload)

Peec.ai positions itself as a platform with a simple setup:

define topics

add prompts

monitor visibility

act based on insights

Why this matters: many teams don’t want an enterprise command center — they want a clear analytics layer they can adopt quickly.

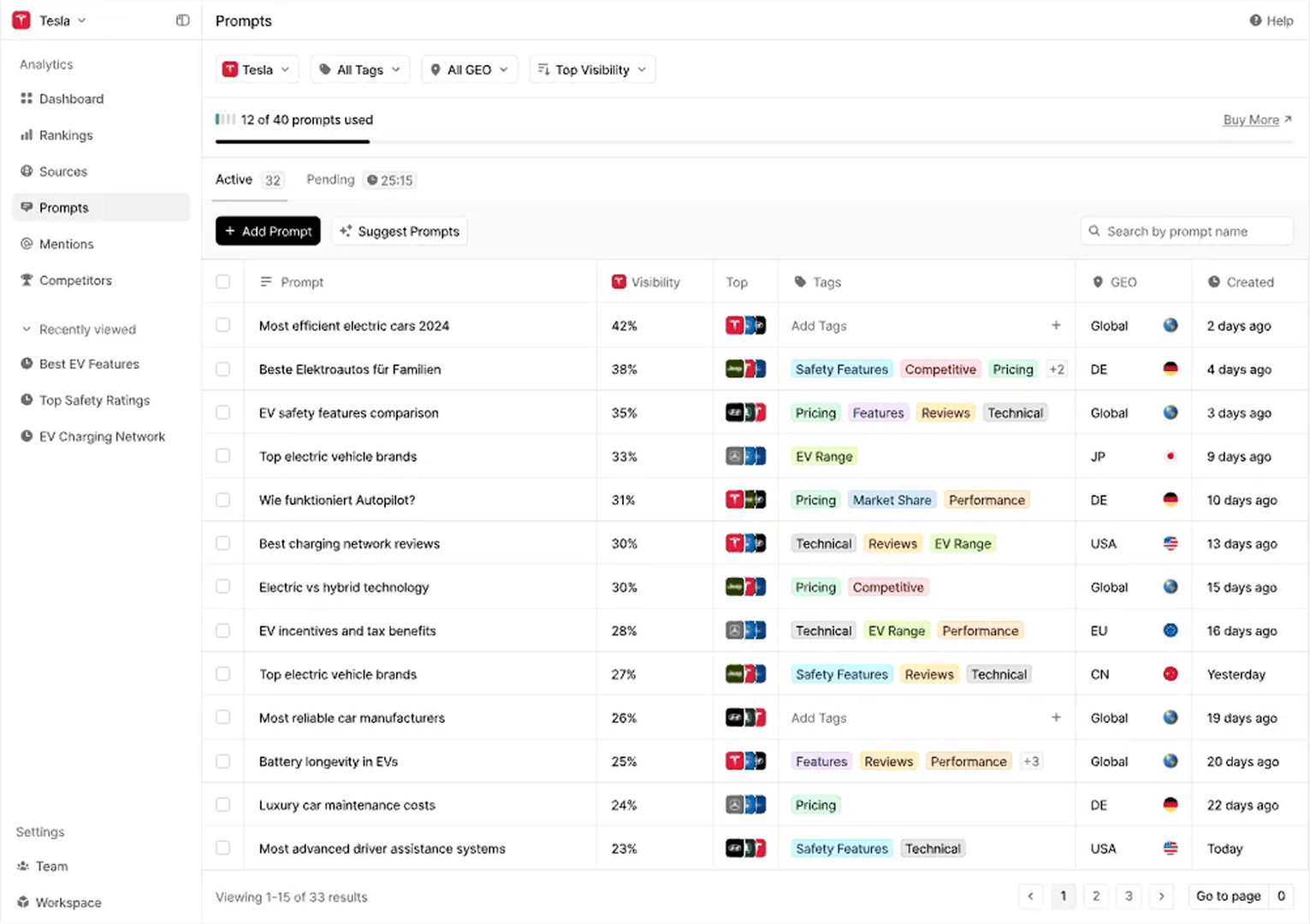

2) Strong focus on citations and sources

Peec.ai emphasizes citations as a key lever: the idea is to identify which sources AI uses and optimize accordingly.

Some Peec materials also distinguish between:

content being used in the answer

content being explicitly cited

Why this matters: citations are often the most practical clue to what AI trusts today.

3) Prompt discovery + GEO recommendations as a core part of the product

Peec.ai publicly states that the following features are part of its core platform (not hidden behind multiple modules):

relevant prompt suggestions

GEO recommendations

exports

Why this matters: for small and mid teams, a complete “core toolkit” without modular upgrades can be easier to adopt.

What is similar in Dabudai and Peec.ai?

Even though their product philosophy differs, they overlap in a few important areas.

1) Both track brand visibility in AI answers

Both platforms are built around monitoring how AI describes your brand.

Why this matters: AI answers change frequently. You need tracking over time, not one-time screenshots.

2) Both are prompt-based

You define the prompts/questions that matter for your ICP, category, and “vs/alternatives” intent.

Why this matters: AEO (answer engine optimization) is not about tracking everything. It is about tracking the prompts that reflect real demand.

3) Both are built for marketing teams

Both tools are designed for marketing/SEO teams, not only for engineers.

Why this matters: AEO execution usually sits in marketing — even if it needs cross-functional support.

Platform comparison table

| Criteria | Dabudai | Peec.ai | Why this matters |
| --- | --- | --- | --- |
| Main outcome | Improvement plan + execution + metric control | AI search analytics + citations | Analytics alone doesn’t move AI outcomes unless it becomes actions. |
| Operational loop | Measure → Explain → Execute → Track impact | Monitor → analyze → optimize | The loop determines speed and repeatability. |
| Fast start | Baseline → first recommendations in ~10 days | Very fast setup and monitoring | Depends on whether you need “actions” or “visibility”. |
| “Why competitors win?” | Root-cause (topic → prompt → competitor) + missing signals | More analytics and citations | Without root-cause, teams often guess what to change. |
| Smart Recommendations | Prioritized backlog with impact/effort | Not the core framing | AEO is a prioritization problem. |
| 3rd-party strategy | Full playbook: where + what to publish | Citation-focused insights | Seeing sources is step 1; playbook is step 2. |
| Level of analysis | Company → Topics → Prompts (AI Visibility Map) | Topics + prompts | Root-cause requires a map, not only prompt lists. |
| Agency readiness | White-label + domain + multi-client | Not a core focus | Agencies need trust and resale packaging. |
| Best fit | Teams that want AI growth via action loop | Teams that want clean monitoring + citations | Different maturity and operating models. |

When to choose Peec.ai vs Dabudai?

| Situation / case | Better with Dabudai | Better with Peec.ai |
| --- | --- | --- |
| Need a fast pilot with clear action plan | ✅ Smart Recommendations + closed loop | ➖ Monitoring-first |
| Main question: “How does AI show us and what should we change?” | ✅ Built for this | ➖ More analytics, less execution loop |
| Need clean analytics and citation tracking | ✅ Yes, but more action-focused | ✅ Strong |
| Want prioritized backlog (impact/effort) | ✅ | ➖ |
| Want a third-party publication plan | ✅ | ➖ Mostly citation insights |
| Agency selling AEO as a product | ✅ | ➖ |

Simple choice guide

Choose Dabudai if this sounds like you

“I don’t need another dashboard — I need clear actions and their order.”

“We want a pilot fast and first recommendations in ~2 weeks.”

“AI compares us with competitors — we want to know why we lose.”

“We need higher Share of Voice and recommendation rate, not just mentions.”

“We need a third-party playbook, not random outreach.”

Choose Peec.ai if this sounds like you

“We want a clean monitoring platform with minimal setup.”

“Citations and sources are our main optimization lever.”

“We want a complete core toolkit without a heavy enterprise system.”

“We want fast AI search analytics and reporting.”

Mini glossary

Coverage — how often AI mentions your brand

Share of Voice (SoV) — how often AI talks about you vs competitors

Average Position — average ranking when AI lists options

Recommendation rate — how often AI clearly recommends you

Citations/Sources — sites/pages AI uses to form the answer

Prompts — the questions you track (ICP, “vs”, “alternatives”)

AI monitoring visibility — tracking how often (and in what context) your brand appears in AI-generated answers across engines, prompts, and topics, so you can spot drops, wins, and changes over time.
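To make the glossary terms concrete, here is a minimal sketch that computes them from a handful of hypothetical answer records. The formulas are one plausible reading of the definitions above, not either vendor's documented calculation, and the record layout, brand names, and `visibility_metrics` helper are all assumptions for illustration.

```python
BRAND = "Acme"  # hypothetical brand name

# Hypothetical records: brands in the order an answer lists them (each brand
# at most once per answer), plus the brand the answer explicitly recommends.
answers = [
    {"brands": ["Acme", "Rival", "Other"], "recommended": "Acme"},
    {"brands": ["Rival", "Acme"], "recommended": "Rival"},
    {"brands": ["Rival"], "recommended": None},
    {"brands": ["Other", "Rival", "Acme"], "recommended": None},
]


def visibility_metrics(records, brand):
    """Compute glossary-style metrics for one brand over a non-empty record list."""
    total = len(records)
    with_brand = [r for r in records if brand in r["brands"]]
    all_mentions = sum(len(r["brands"]) for r in records)
    positions = [r["brands"].index(brand) + 1 for r in with_brand]
    return {
        # Coverage: share of answers that mention the brand at all.
        "coverage": len(with_brand) / total,
        # Share of Voice: the brand's mentions as a share of all brand mentions.
        "share_of_voice": len(with_brand) / all_mentions,
        # Average Position: mean 1-based rank in answers that list the brand.
        "average_position": sum(positions) / len(positions) if positions else None,
        # Recommendation rate: share of answers that explicitly recommend the brand.
        "recommendation_rate": sum(r["recommended"] == brand for r in records) / total,
    }


metrics = visibility_metrics(answers, BRAND)
for name, value in metrics.items():
    print(name, value)
```

Note how the metrics can diverge: in this sample the brand appears in three of four answers but is recommended in only one, which is the gap between being mentioned and being recommended.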

FAQ

1) What are the best AI search monitoring tools in 2026?

The best AI search monitoring tool in 2026 depends on whether you need pure monitoring or a closed-loop system that turns monitoring into prioritized actions. Dabudai is built for a measure → explain → execute → re-measure workflow, while Peec.ai focuses on fast setup, monitoring, and citation-led insights.

2) If both products track AI answers — why not choose any?

Because the operating model is different:

Dabudai = action loop.

Peec.ai = analytics + citations.

3) Which gives results faster “this month”?

If you need monitoring and reporting, Peec.ai can be faster to adopt.

If you need a change loop with prioritized tasks, Dabudai is built for that.

4) We want AI to recommend us more, not just mention us

Then you need: root-cause + prioritized change plan + metrics control. That’s Dabudai’s core frame.

5) Why does AI cite competitors more often?

Usually because competitors have stronger third-party presence, clearer topical authority, and more “proof” signals in trusted sources.

6) Do we need “X vs Y” pages for AI visibility?

Yes. This is one of the highest-intent formats because users ask AI “X vs Y” and “alternatives”.

7) What is the minimum pilot?

20–30 ICP prompts

baseline: how AI answers now

a list of “what to fix”

2–3 quick changes

re-measure

Conclusion

If your question is:

“How does AI show us, why do we lose vs competitors, and what should we change to win AI recommendations?” → Dabudai is built for this.

If your question is:

“How do we monitor AI visibility and citations with a clean, simple workflow?” → Peec.ai is a strong fit.

The best platform is not the one with more dashboards — it’s the one that fits your AEO maturity and operating model.